Can’t use Microsoft Foundry because of compliance or an Azure bill that doubles during an AI development spike, but still want the best AI debugging experience in Aspire? Here’s how to keep the full GenAI chat-log sparkles while Ollama and Gemma 4 run locally.

If you’ve used the Foundry or Azure OpenAI integration in Aspire, you know the part I mean — the GenAI visualiser that shipped in Aspire 9.5 (September 2025) and has kept gaining polish since.

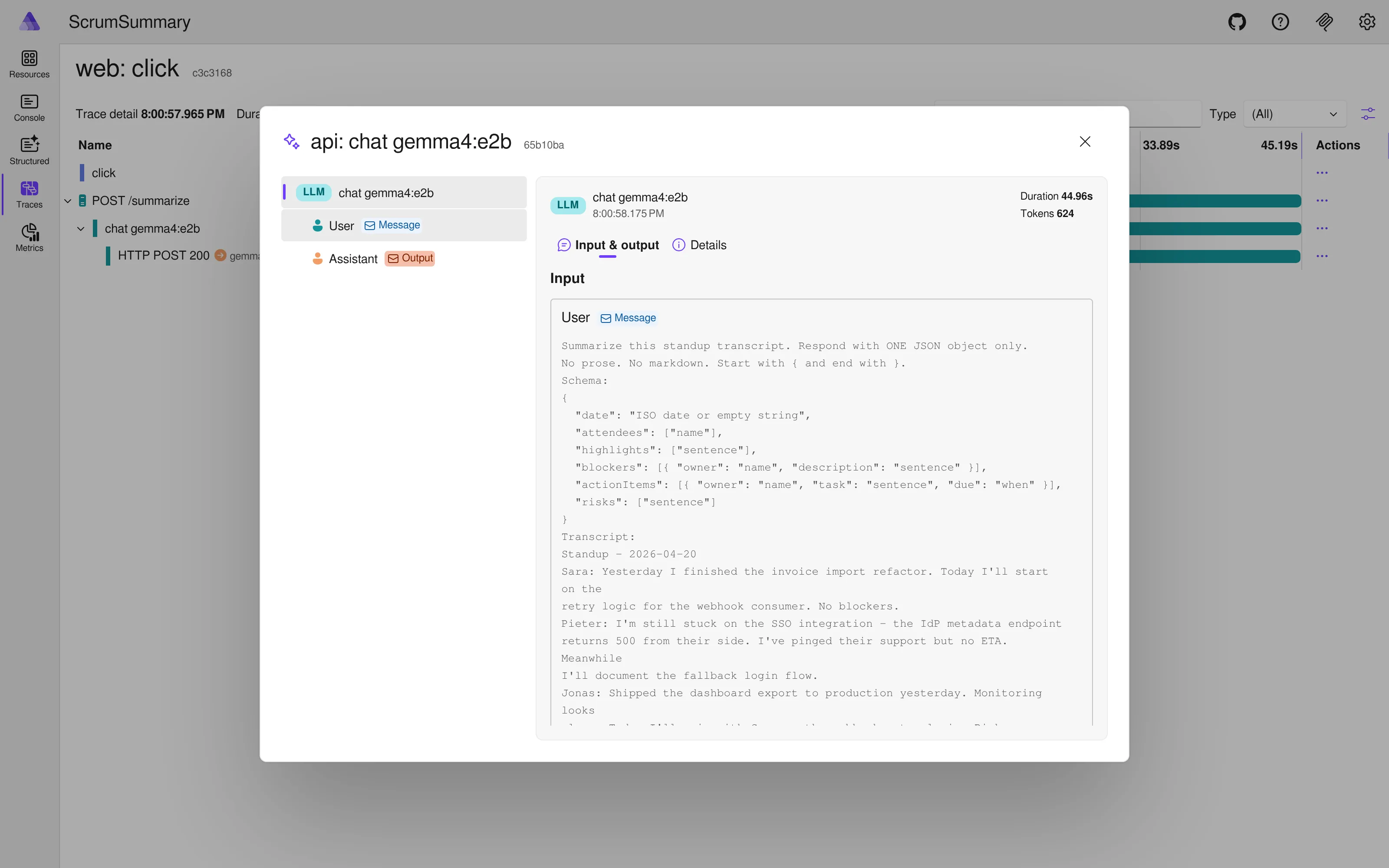

Click a trace, scroll to the LLM call, and a small sparkles icon appears next to the span. Click the sparkles and the dashboard opens a chat log: system prompt, user message, assistant response, tool calls, token counts, finish reason. Everything you need to reason about what the model actually did, right next to the code path that produced it. No log-dumping, no side-channel inspector.

At first glance that view looks like a Foundry-exclusive perk. It isn’t. The dashboard doesn’t check gen_ai.system == "openai" or phone home to Azure. It keys off the OpenTelemetry GenAI semantic conventions, so any LLM call that produces gen_ai.* spans with the right shape gets the same treatment, whatever backend sits behind it. Ollama, LM Studio, llama.cpp: if it speaks OpenAI-compatible chat completions, it can light up the same popup.

The plan

You just have to wire four things yourself. The rest of this post walks through those four things using Ollama and the new Gemma 4 E2B model as the concrete example. The shape generalises to anything else you run locally.

Quick aside: Gemma 4 ships in a few sizes. The smaller ones run on a laptop; the larger ones want real GPU memory. Pick whichever fits your hardware.

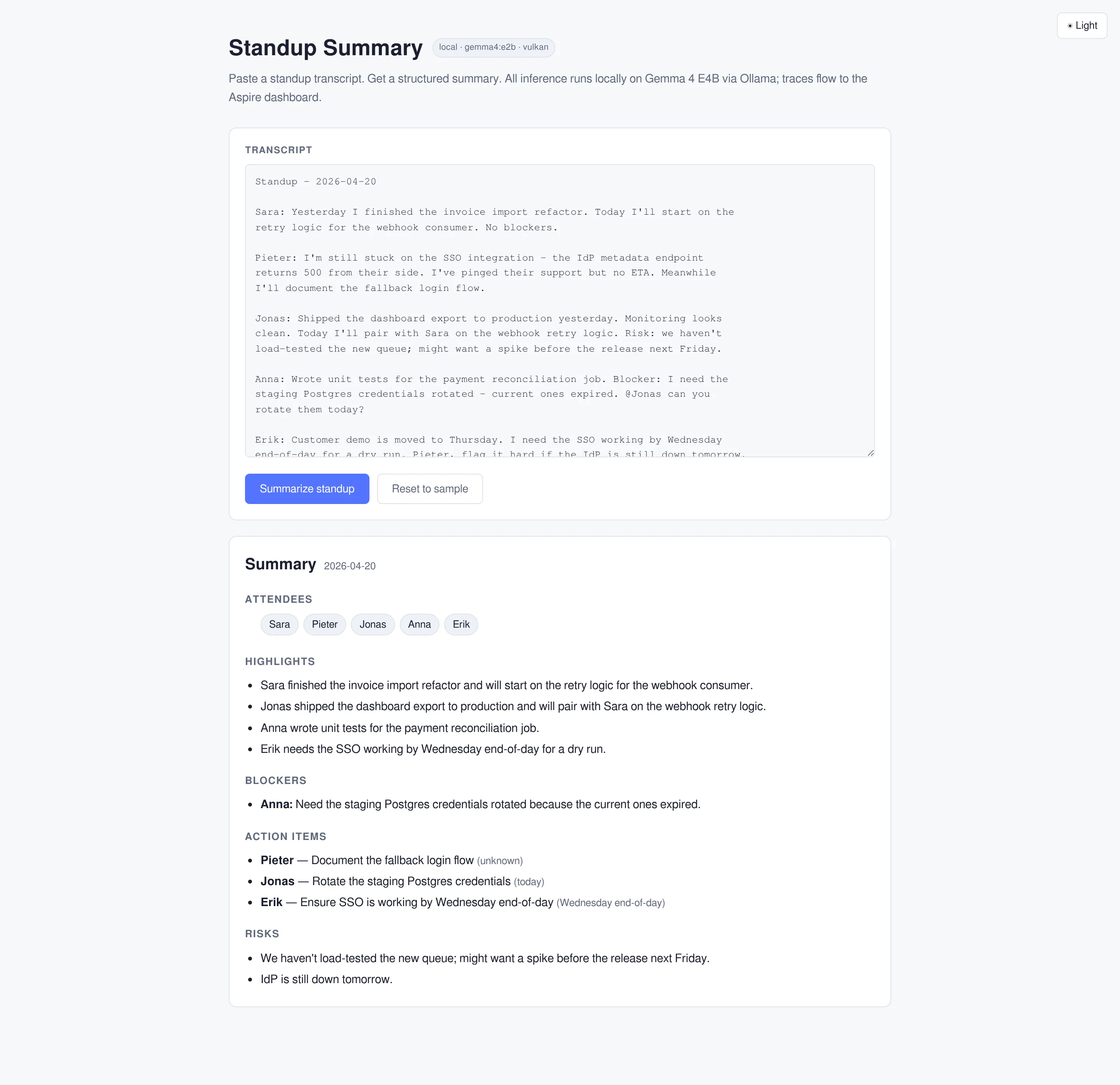

To demonstrate, I’ve built a small standup summarizer: an app that sends a meeting transcript to your local LLM and gets a structured summary back.

What the dashboard needs to render the view

Here’s the mechanism. If you understand this part, the code falls out almost automatically. The dashboard rendering hinges on spans that follow the OpenTelemetry GenAI semantic conventions. The important attributes are:

- gen_ai.operation.name: chat, embedding, etc.
- gen_ai.request.model: the model you asked for
- gen_ai.response.model: what actually responded
- gen_ai.input.messages: the prompt, JSON-serialised
- gen_ai.output.messages: the response, JSON-serialised
- gen_ai.usage.input_tokens / gen_ai.usage.output_tokens
- gen_ai.response.finish_reasons

When a span shows up in the traces view carrying those attributes on the Experimental.Microsoft.Extensions.AI activity source, the dashboard adds the sparkles icon and renders the chat log popup.
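To see that the span shape matters and the vendor does not, here is a minimal console sketch. It hand-builds a GenAI-shaped span using nothing but System.Diagnostics. The source name ScrumSummary.ManualGenAi is hypothetical. The role/parts message JSON follows my reading of recent versions of the semantic conventions. The sketch assumes the dashboard treats a span from a custom source the same way it treats one from Microsoft.Extensions.AI, which you should verify against your Aspire version. In a real service the source would also need an AddSource(...) registration, or the tracer provider never sees it.

```csharp
using System;
using System.Collections.Generic;
using System.Diagnostics;
using System.Text.Json;

// Hypothetical source name; a real app would also register it with AddSource(...).
using var source = new ActivitySource("ScrumSummary.ManualGenAi");

// In-process listener standing in for the OpenTelemetry SDK: it samples everything
// from our source and keeps finished spans so we can inspect their attributes.
var finished = new List<Activity>();
using var listener = new ActivityListener
{
    ShouldListenTo = s => s.Name == source.Name,
    Sample = (ref ActivityCreationOptions<ActivityContext> options) => ActivitySamplingResult.AllDataAndRecorded,
    ActivityStopped = finished.Add
};
ActivitySource.AddActivityListener(listener);

// Message payloads in the role/parts layout (treat the exact schema as an assumption).
var input = JsonSerializer.Serialize(new[]
{
    new { role = "user", parts = new[] { new { type = "text", content = "Summarize this standup." } } }
});
var output = JsonSerializer.Serialize(new[]
{
    new { role = "assistant", parts = new[] { new { type = "text", content = "{\"attendees\":[]}" } }, finish_reason = "stop" }
});

// Disposing the activity stops it, which fires ActivityStopped.
using (var span = source.StartActivity("chat gemma4:e2b", ActivityKind.Client))
{
    span?.SetTag("gen_ai.operation.name", "chat");
    span?.SetTag("gen_ai.request.model", "gemma4:e2b");
    span?.SetTag("gen_ai.response.model", "gemma4:e2b");
    span?.SetTag("gen_ai.input.messages", input);
    span?.SetTag("gen_ai.output.messages", output);
    span?.SetTag("gen_ai.usage.input_tokens", 382);
    span?.SetTag("gen_ai.usage.output_tokens", 278);
    span?.SetTag("gen_ai.response.finish_reasons", new[] { "stop" });
}

foreach (var tag in finished[0].TagObjects)
{
    var value = tag.Value is string[] items ? string.Join(",", items) : tag.Value;
    Console.WriteLine($"{tag.Key} = {value}");
}
```

Nothing in this block mentions Ollama or Azure. The span is only a name plus a set of gen_ai.* attributes, and that is what the popup is built from.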

IChatClient is the Microsoft.Extensions.AI abstraction for something you can send chat messages to. Azure OpenAI, Ollama, and local models all either implement it directly or adapt into it. The OpenTelemetry wrapper we care about only knows how to trace things shaped like an IChatClient, which is why almost everything in the rest of this post routes through that interface.

Microsoft.Extensions.AI already knows how to emit the GenAI span shape. Calling .UseOpenTelemetry() on a ChatClientBuilder wraps the client in an OpenTelemetryChatClient decorator that produces spec-compliant spans. The catch is that the wrapping is opt-in and the content attributes are off by default. That’s where people get stuck: they see an empty popup, assume the integration doesn’t work with their backend, and give up.

It does work. You just have to flip three switches.

Four things to wire

To earn the sparkles with a local model, you need:

- A hosting integration that runs the model backend as an Aspire resource and produces a connection string.
- An IChatClient in the consuming service, wrapped with UseOpenTelemetry() and content capture enabled.
- A tracing source registration in ServiceDefaults so the GenAI spans make it into your tracer provider.
- A content-capture toggle, either via environment variable or via the UseOpenTelemetry configure action. Without it, the popup shows up but the messages are blank.

Miss any one of the four and you get silently degraded output. Miss #3 and the spans never leave the process. Miss #4 and the popup is empty. Miss #2 and you get HTTP spans but no GenAI spans. Miss #1 and you’re hand-rolling container orchestration.

Let’s build them.

The hosting integration: Ollama as a first-class resource

Aspire has no Ollama integration out of the box. The Community Toolkit has one, and it’s fine for many cases, but the whole point of this post is showing you the shape so you can write your own for any OpenAI-compatible backend.

Start with a new class library referenced by the AppHost. The library needs Aspire.Hosting as a package reference. Define the resource:

```csharp
using Aspire.Hosting.ApplicationModel;

namespace ScrumSummary.Hosting.Ollama;

public sealed class OllamaResource(string name)
    : ContainerResource(name), IResourceWithConnectionString
{
    internal const string PrimaryEndpointName = "http";

    private EndpointReference? _primaryEndpoint;

    public EndpointReference PrimaryEndpoint =>
        _primaryEndpoint ??= new EndpointReference(this, PrimaryEndpointName);

    public ReferenceExpression ConnectionStringExpression =>
        ReferenceExpression.Create(
            $"Endpoint={PrimaryEndpoint.Property(EndpointProperty.Url)}");
}
```

Two things to notice. First, inheriting ContainerResource gets you all the container lifecycle plumbing for free. Second, implementing IResourceWithConnectionString means you can call WithReference(ollama) on any consuming project and Aspire injects a standard connection string, no bespoke wiring.

Now a child resource for a specific model. This gives you a distinct row in the dashboard per model and lets a single Ollama instance host several without duplicating containers:

```csharp
public sealed class OllamaModelResource(string name, string modelTag, OllamaResource parent)
    : Resource(name),
      IResourceWithConnectionString,
      IResourceWithParent<OllamaResource>,
      IResourceWithWaitSupport
{
    public string ModelTag { get; } = modelTag;

    public OllamaResource Parent { get; } = parent;

    public ReferenceExpression ConnectionStringExpression =>
        ReferenceExpression.Create(
            $"Endpoint={Parent.PrimaryEndpoint.Property(EndpointProperty.Url)};Model={ModelTag}");
}
```

The connection string carries both the endpoint and the model tag. The consuming service gets everything it needs in a single configuration value.

IResourceWithWaitSupport is the marker that lets downstream resources WaitFor(model) and have the wait block on a specific state. That’s what you want for model readiness. The API shouldn’t start accepting requests before the model is pulled and ready.
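The consuming service has to split that single value back into an endpoint and a model tag. This sketch uses the BCL's DbConnectionStringBuilder, which already understands key=value; syntax, including quoting. That is my choice, not something Aspire requires; the API code in this post does the same job with a hand-rolled Split. ParseModelConnectionString is a hypothetical helper name, and the port in the sample value is made up.

```csharp
using System;
using System.Data.Common;

// Hypothetical helper: parses "Endpoint=...;Model=..." (the shape OllamaModelResource
// emits) into the OpenAI-compatible base URL and the model tag.
(Uri BaseUrl, string Model) ParseModelConnectionString(string connectionString)
{
    var csb = new DbConnectionStringBuilder { ConnectionString = connectionString };

    // Keyword lookups on DbConnectionStringBuilder are case-insensitive.
    if (!csb.TryGetValue("Endpoint", out var endpoint) || !csb.TryGetValue("Model", out var model))
        throw new FormatException("Expected a connection string of the form Endpoint=...;Model=...");

    // Ollama serves its OpenAI-compatible API under /v1.
    var baseUrl = new Uri(endpoint.ToString()!.TrimEnd('/') + "/v1");
    return (baseUrl, model.ToString()!);
}

// Aspire hands the API this value as ConnectionStrings__gemma; the port is illustrative.
var (baseUrl, modelTag) = ParseModelConnectionString("Endpoint=http://localhost:54321;Model=gemma4:e2b");
Console.WriteLine($"{baseUrl} -> {modelTag}");
```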

Now the extensions, AddOllama() and AddModel():

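```csharp
using System.Net.Http.Json;
using Aspire.Hosting;
using Aspire.Hosting.ApplicationModel;
using Microsoft.Extensions.DependencyInjection;
using Microsoft.Extensions.Logging;

namespace ScrumSummary.Hosting.Ollama;

public static class OllamaBuilderExtensions
{
    private const string OllamaImage = "docker.io/ollama/ollama";
    private const int OllamaPort = 11434;

    public static IResourceBuilder<OllamaResource> AddOllama(
        this IDistributedApplicationBuilder builder,
        string name = "ollama")
    {
        var resource = new OllamaResource(name);

        return builder.AddResource(resource)
            .WithImage(OllamaImage, "latest")
            .WithHttpEndpoint(
                port: null,               // let Aspire pick a host port
                targetPort: OllamaPort,   // Ollama's port inside the container
                name: OllamaResource.PrimaryEndpointName)
            .WithVolume($"{name}-models", "/root/.ollama") // persist pulled models
            .WithLifetime(ContainerLifetime.Persistent)
            .WithHttpHealthCheck("/");
    }

    // AddModel, PullModelAsync and PublishState (shown below) live in this class too.
}
```

Three details worth calling out.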

WithVolume gives Ollama a persistent location for pulled models. Gemma 4 E2B is about 7 GB on disk. You do not want to re-pull it on every debug session.

WithLifetime(ContainerLifetime.Persistent) goes one step further: the container keeps running after the AppHost stops, so even the startup time is amortised away on the second run.

Using docker.io/ollama/ollama instead of ollama/ollama matters for anyone using Podman. Docker resolves unqualified image names against Docker Hub by default. Podman may not, depending on its unqualified-search-registries configuration, and an unqualified name can fail with a cryptic error. Fully qualifying the image avoids an easy 10-minute debugging rabbit hole.

One scope note on WithHttpEndpoint: plain HTTP is fine when the consumer is on the same host and talks to Ollama over a local container network. Nothing crosses a physical LAN, so TLS wouldn’t add meaningful protection. Two caveats, though. First, the endpoint is also published on a randomly assigned host port, not just on the container network. Any other process on the same machine can reach that port and read your prompts, and so can a co-tenant on a shared VM, Kubernetes node, or WSL instance. Second, the moment you point this resource at anything cross-host, such as a corporate gateway or another machine on the network, http:// becomes a real data-plane leak and you want TLS and auth in front of it.

Pulling the model on resource ready

Ollama starts with an empty model store. After the container is healthy, you need to trigger a pull. Aspire has an event pipeline for exactly this kind of side-effect. Subscribe to ResourceReadyEvent on the parent and post to /api/pull:

```csharp
public static IResourceBuilder<OllamaModelResource> AddModel(
    this IResourceBuilder<OllamaResource> builder,
    string name,
    string modelTag)
{
    var model = new OllamaModelResource(name, modelTag, builder.Resource);

    var modelBuilder = builder.ApplicationBuilder
        .AddResource(model)
        .WithParentRelationship(builder.Resource)
        .WithInitialState(new CustomResourceSnapshot
        {
            ResourceType = "OllamaModel",
            Properties = [new ResourcePropertySnapshot("Model", modelTag)],
            State = new ResourceStateSnapshot("Waiting", KnownResourceStateStyles.Info)
        });

    // Once the Ollama container reports ready, pull the model.
    builder.ApplicationBuilder.Eventing.Subscribe<ResourceReadyEvent>(
        builder.Resource,
        async (evt, ct) => await PullModelAsync(evt, model, ct));

    return modelBuilder;
}
```

The pull itself is a plain HTTP call. Aspire exposes the resolved host and port via the endpoint reference:

```csharp
private static async Task PullModelAsync(
    ResourceReadyEvent evt,
    OllamaModelResource model,
    CancellationToken ct)
{
    var logger = evt.Services
        .GetRequiredService<ResourceLoggerService>()
        .GetLogger(model);

    var notifications = evt.Services
        .GetRequiredService<ResourceNotificationService>();

    var endpoint = model.Parent.PrimaryEndpoint;

    using var http = new HttpClient
    {
        BaseAddress = new Uri($"http://{endpoint.Host}:{endpoint.Port}"),
        Timeout = TimeSpan.FromMinutes(30) // multi-GB pulls take a while
    };

    try
    {
        // Dashboard shows "Pulling {tag}"
        await PublishState(notifications, model, $"Pulling {model.ModelTag}", KnownResourceStateStyles.Info);

        using var response = await http.PostAsJsonAsync(
            "/api/pull",
            new { model = model.ModelTag, stream = false },
            ct);

        response.EnsureSuccessStatusCode();

        // Dashboard shows "Running" (success style); WaitFor on this resource now unblocks
        await PublishState(notifications, model, KnownResourceStates.Running, KnownResourceStateStyles.Success);

        logger.LogInformation("Model {Model} ready", model.ModelTag);
    }
    catch (Exception ex) when (!ct.IsCancellationRequested)
    {
        // Without this, a failed pull would leave the dashboard row stuck on "Pulling".
        logger.LogError(ex, "Pulling model {Model} failed", model.ModelTag);
        await PublishState(notifications, model, KnownResourceStates.FailedToStart, KnownResourceStateStyles.Error);
    }
}

private static Task PublishState(
    ResourceNotificationService notifications,
    IResource resource,
    string state,
    string style) =>
    notifications.PublishUpdateAsync(resource, s => s with
    {
        State = new ResourceStateSnapshot(state, style)
    });
```

The ResourceNotificationService updates are the important part. They drive the state shown on the dashboard row, so the user sees “Pulling gemma4:e2b” while the download runs and “Running” when it lands. The WaitFor on the dependent API picks up the state transition automatically.
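A single “Pulling” state is fine, but with stream = true Ollama reports progress as one JSON object per line, and you could surface that on the dashboard row instead. To do that, you would:

- send the request with HttpCompletionOption.ResponseHeadersRead (for example, SendAsync with JsonContent.Create(...) as the body, since PostAsJsonAsync doesn't take that option);
- read lines with a StreamReader;
- pass each mapped string to PublishState, ideally throttled.

The line-to-state mapping below is a hypothetical helper. The status, total, completed and error field names follow my reading of Ollama's API docs, so verify them against your Ollama version.

```csharp
using System;
using System.Text.Json;

// Hypothetical helper: maps one NDJSON line from POST /api/pull (stream = true)
// to a short state string suitable for PublishState.
string DescribePullLine(string line, string modelTag)
{
    using var doc = JsonDocument.Parse(line);
    var root = doc.RootElement;

    // Assumption: a failed pull reports an "error" field instead of a status.
    if (root.TryGetProperty("error", out var error))
        throw new InvalidOperationException($"Pull of {modelTag} failed: {error.GetString()}");

    var status = root.TryGetProperty("status", out var s) ? s.GetString() ?? "" : "";

    // Layer downloads carry byte counts; manifest and verification steps only carry a status.
    if (root.TryGetProperty("total", out var total) &&
        root.TryGetProperty("completed", out var completed) &&
        total.GetInt64() > 0)
    {
        var percent = completed.GetInt64() * 100 / total.GetInt64();
        return $"Pulling {modelTag} ({percent}%)";
    }

    return $"Pulling {modelTag}: {status}";
}

Console.WriteLine(DescribePullLine("{\"status\":\"pulling manifest\"}", "gemma4:e2b"));
Console.WriteLine(DescribePullLine(
    "{\"status\":\"pulling 4e4a\",\"digest\":\"4e4a\",\"total\":200,\"completed\":50}", "gemma4:e2b"));
```

Throwing on an error line lets the same try/catch that publishes FailedToStart handle streamed failures too.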

One gotcha worth mentioning because it cost me an hour the first time: do not make the model resource wait for its own parent (calling WaitFor(builder) on the model builder inside AddModel). Aspire throws “The ‘X’ resource cannot wait for its parent ‘Y’.” Parent relationships are handled via WithParentRelationship, not WaitFor, and the ResourceReadyEvent subscription is how you sequence the pull.

Wiring it up in the AppHost

With the integration in place, the AppHost becomes almost boring:

```csharp
using ScrumSummary.Hosting.Ollama;

var builder = DistributedApplication.CreateBuilder(args);

var ollama = builder.AddOllama("ollama");
var gemma = ollama.AddModel("gemma", "gemma4:e2b");

builder.AddProject<Projects.ScrumSummary_Api>("api")
    .WithReference(gemma)
    .WaitFor(gemma)
    .WithEnvironment("OTEL_INSTRUMENTATION_GENAI_CAPTURE_MESSAGE_CONTENT", "true");

builder.Build().Run();
```

One environment variable is doing real work there. OTEL_INSTRUMENTATION_GENAI_CAPTURE_MESSAGE_CONTENT=true tells the OpenTelemetryChatClient to put the actual prompt and response into span attributes. Without it, the spans still fire, the sparkles still appear, but the chat log is empty. You get a visualiser with no content.

Keep it on in dev, off in production. Captured prompts and responses become a new class of data inside your telemetry backend. That includes whatever users typed (PII, health data, source code, credentials they pasted in) and whatever the model said back, and all of it inherits the retention and access-control surface of your tracing system, which is usually looser than your primary data store. That’s a GDPR conversation waiting to happen:

- the trace store becomes another processing location to record under Article 30;
- subject-access and erasure requests now reach it;
- SIEM ingestion picks it up;
- “dev” environments often contain production-shaped data.

Capture liberally while prototyping; turn it off (or put a redaction processor in front of the exporter) anywhere else.
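The redaction-processor option can be as small as a function that overwrites the content attributes before a span leaves the process. This sketch uses plain System.Diagnostics and assumes the attribute names listed earlier in this post. RedactGenAiContent and the "[redacted]" marker are my own hypothetical choices. The OpenTelemetry wiring is only described in a comment, not tested: the function would be the body of an OnEnd override on a BaseProcessor<Activity> registered ahead of the exporter. If your package version records content somewhere else, for example as span events, this won't catch it.

```csharp
using System;
using System.Diagnostics;

// Attributes carrying raw prompt/response text (names as listed earlier in this post).
var contentAttributes = new[] { "gen_ai.input.messages", "gen_ai.output.messages" };

// Hypothetical helper: overwrites content attributes in place and leaves token counts,
// model names and finish reasons alone. In an OpenTelemetry pipeline this would run from
// BaseProcessor<Activity>.OnEnd, registered before the exporter (assumption, untested here).
void RedactGenAiContent(Activity activity)
{
    foreach (var key in contentAttributes)
    {
        if (activity.GetTagItem(key) is not null)
            activity.SetTag(key, "[redacted]");
    }
}

var span = new Activity("chat gemma4:e2b");
span.SetTag("gen_ai.input.messages", "[{\"role\":\"user\",\"parts\":[]}]");
span.SetTag("gen_ai.usage.input_tokens", 382);
RedactGenAiContent(span);
Console.WriteLine(span.GetTagItem("gen_ai.input.messages"));
```

Overwriting instead of removing keeps the span useful in the dashboard: the token counts and model names stay visible, and it is obvious that content was captured and then scrubbed.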

The IChatClient: making an OpenAI-compatible local model look like Azure OpenAI

Ollama exposes an OpenAI-compatible endpoint at /v1/chat/completions. That’s the trick that makes the rest cheap. You point the official OpenAI .NET SDK at that endpoint, wrap its chat client as an IChatClient, and wrap that with UseOpenTelemetry. From your code’s perspective it looks exactly like Azure OpenAI: same interface, same span shape. Behaviour diverges in corners (tool calls, streaming finish reasons, the usage block) — more on that later.
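To make “OpenAI-compatible” concrete: the SDK call boils down to a POST of an OpenAI-style JSON body to /v1/chat/completions. The sketch below builds such a request with plain HttpClient types but does not send it, which is handy when you want to reproduce a call outside the app. BuildChatCompletionRequest is a hypothetical helper. The sample uses Ollama's default port 11434 rather than the random host port Aspire assigns. Whether a given server honours response_format is exactly the kind of divergence mentioned above.

```csharp
using System;
using System.Net.Http;
using System.Net.Http.Headers;
using System.Text;
using System.Text.Json;

// Hypothetical helper: builds (but does not send) a chat-completions request
// of the kind the OpenAI SDK issues against an OpenAI-compatible server.
HttpRequestMessage BuildChatCompletionRequest(Uri serverBase, string model, string userPrompt)
{
    var body = new
    {
        model,
        messages = new[] { new { role = "user", content = userPrompt } },
        response_format = new { type = "json_object" } // JSON mode, if the server supports it
    };

    return new HttpRequestMessage(HttpMethod.Post, new Uri(serverBase, "/v1/chat/completions"))
    {
        Content = new StringContent(JsonSerializer.Serialize(body), Encoding.UTF8, "application/json"),
        // Ollama doesn't validate the key; the OpenAI protocol just expects one.
        Headers = { Authorization = new AuthenticationHeaderValue("Bearer", "ollama") }
    };
}

var request = BuildChatCompletionRequest(new Uri("http://localhost:11434"), "gemma4:e2b", "Say hi in JSON.");
Console.WriteLine($"{request.Method} {request.RequestUri}");
Console.WriteLine(await request.Content!.ReadAsStringAsync());
```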

In the consuming service:

```csharp
using System.ClientModel;
using Microsoft.Extensions.AI;
using OpenAI;

var builder = WebApplication.CreateBuilder(args);

builder.AddServiceDefaults();

// Injected by WithReference(gemma) as "Endpoint=...;Model=..."
var cs = builder.Configuration.GetConnectionString("gemma")
    ?? throw new InvalidOperationException("Missing connection string for 'gemma'.");

var parts = cs.Split(';', StringSplitOptions.RemoveEmptyEntries | StringSplitOptions.TrimEntries)
    .Select(p => p.Split('=', 2))
    .ToDictionary(p => p[0], p => p[1], StringComparer.OrdinalIgnoreCase);

// Ollama's OpenAI-compatible API lives under /v1
var endpoint = new Uri(parts["Endpoint"].TrimEnd('/') + "/v1");
var modelId = parts["Model"];

builder.Services.AddSingleton<IChatClient>(_ =>
{
    var openAi = new OpenAIClient(
        new ApiKeyCredential("ollama"), // placeholder; Ollama doesn't validate it
        new OpenAIClientOptions { Endpoint = endpoint });

    return new ChatClientBuilder(openAi.GetChatClient(modelId).AsIChatClient())
        .UseOpenTelemetry(configure: c => c.EnableSensitiveData = true)
        .Build();
});
```

Four lines of interest.

new ApiKeyCredential("ollama") is a placeholder. The OpenAI SDK requires a credential value; Ollama doesn’t validate it. Pass anything non-empty and move on.

AsIChatClient() is the bridge between the raw OpenAI SDK type and the Microsoft.Extensions.AI abstraction. Everything downstream speaks IChatClient.

UseOpenTelemetry is the thing that creates the GenAI spans. Without this line, you get HTTP spans (because HttpClient instrumentation is on by default in ServiceDefaults) but no chat {model} span, and no sparkles.

EnableSensitiveData = true and the OTEL_INSTRUMENTATION_GENAI_CAPTURE_MESSAGE_CONTENT environment variable gate different layers: the flag is read by Microsoft.Extensions.AI’s telemetry wrapper, the env var is read by the underlying OTel GenAI instrumentation. They’re not pure aliases. Setting both covers you across package versions while the exact split shakes out in the preview APIs.

One caveat worth naming: the IChatClient registered above holds an HttpClient created by the OpenAI SDK directly, not one sourced from IHttpClientFactory. That's fine for localhost development but not what you want in production. There you lose DNS refresh, handler lifecycle management, and the ability to compose a DelegatingHandler pipeline for auth or retries. When you point this at anything other than a local container (a corporate gateway, a cluster-internal endpoint, Azure OpenAI in staging), switch to AddOpenAIClient with a ConfigureHttpClient-style factory or pass your own HttpClient via OpenAIClientOptions.Transport.

Using it in a handler looks exactly like Azure OpenAI:

```csharp
app.MapPost("/summarize", async (
    SummarizeRequest request,
    IChatClient chat,
    CancellationToken ct) =>
{
    var prompt = $$"""
        Summarize this standup transcript. Respond with one JSON object.
        Schema:
        {
          "attendees": ["name"],
          "highlights": ["sentence"],
          "blockers": [{ "owner": "name", "description": "sentence" }],
          "actionItems": [{ "owner": "name", "task": "sentence", "due": "when" }]
        }
        Transcript:
        {{request.Transcript}}
        """;

    var response = await chat.GetResponseAsync<StandupSummary>(
        prompt,
        new ChatOptions { ResponseFormat = ChatResponseFormat.Json },
        cancellationToken: ct);

    return Results.Ok(response.Result);
});
```

GetResponseAsync<T> does the JSON-to-type deserialisation. StandupSummary is a plain record (record StandupSummary(string[] Attendees, string[] Highlights, Blocker[] Blockers, ActionItem[] ActionItems)) with child records for Blocker and ActionItem. Setting ResponseFormat = ChatResponseFormat.Json asks the backend to force valid JSON output. Smaller local models benefit from this because they otherwise tend to wander off into markdown fences or prose prefixes.
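Even with ResponseFormat set, a small model can occasionally wrap its JSON in fences or prose, and then the typed call fails to deserialise. One defensive fallback, which is my suggestion and not part of the sample, works in three steps:

1. Call the non-generic GetResponseAsync and take response.Text.
2. Trim the text to the outermost JSON object.
3. Deserialise it yourself, for example with JsonSerializer.Deserialize<StandupSummary>(json, JsonSerializerOptions.Web) so that camelCase keys map onto the record's PascalCase parameters.

The trimming step as a hypothetical helper, assuming the reply contains a single top-level object:

```csharp
using System;
using System.Text.Json;

// Hypothetical fallback: keeps only the outermost {...} of a model reply, dropping
// markdown fences or a chatty preamble, then checks that the slice parses as JSON.
string ExtractJsonObject(string modelText)
{
    var start = modelText.IndexOf('{');
    var end = modelText.LastIndexOf('}');
    if (start < 0 || end < start)
        throw new FormatException("No JSON object found in the model reply.");

    var candidate = modelText[start..(end + 1)];

    // Throws JsonException if the slice still isn't valid JSON.
    using (JsonDocument.Parse(candidate)) { }

    return candidate;
}

var fence = new string('`', 3);
var reply = "Sure! Here is the summary:\n" + fence + "json\n{\"attendees\":[\"Ana\",\"Ben\"]}\n" + fence;
Console.WriteLine(ExtractJsonObject(reply));
```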

The ServiceDefaults change everybody forgets

The default Aspire ServiceDefaults template gives you an OpenTelemetry tracer provider, but it only subscribes to a handful of activity sources: the application name, ASP.NET Core, HttpClient. Microsoft.Extensions.AI publishes to an activity source called Experimental.Microsoft.Extensions.AI. It is not in the default list.

That’s the #3 gotcha. If you skip this change, the spans are created but they’re never exported to the dashboard. You end up staring at a clean Traces view wondering why nothing is there. Add the source explicitly:

```csharp
builder.Services.AddOpenTelemetry()
    .WithTracing(tracing =>
    {
        tracing.AddSource(builder.Environment.ApplicationName)
            .AddSource("Experimental.Microsoft.Extensions.AI") // GenAI spans from UseOpenTelemetry()
            .AddSource("OpenAI.*")                            // the OpenAI SDK's own sources
            .AddAspNetCoreInstrumentation(/* ... */)
            .AddHttpClientInstrumentation();
    });
```

"OpenAI.*" catches the OpenAI SDK’s own activity sources, which emit complementary spans around the raw HTTP layer. It's not strictly required for the dashboard GenAI view, but it gives useful context when you’re debugging: you see both the semantic chat span and the underlying HTTP call it generated.

This single line of code is the difference between “nothing in the dashboard” and “everything in the dashboard.”
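If the Traces view is still empty, first establish whether the spans are never created or created but never exported. A throwaway ActivityListener answers that in-process, because it sees activities from every source regardless of your AddSource list. In the sketch below, the simulated source merely borrows the Microsoft.Extensions.AI name to stand in for a real IChatClient call. A listener that samples everything also changes what gets recorded process-wide, so keep it in a dev-only code path.

```csharp
using System;
using System.Collections.Concurrent;
using System.Diagnostics;

// Counts how many activities each ActivitySource starts in this process,
// independent of which sources the OpenTelemetry tracer provider subscribes to.
var seenSources = new ConcurrentDictionary<string, int>();
using var listener = new ActivityListener
{
    ShouldListenTo = _ => true,
    Sample = (ref ActivityCreationOptions<ActivityContext> options) => ActivitySamplingResult.AllData,
    ActivityStarted = a => seenSources.AddOrUpdate(a.Source.Name, 1, (_, n) => n + 1)
};
ActivitySource.AddActivityListener(listener);

// Simulated traffic standing in for an IChatClient wrapped with UseOpenTelemetry().
using var meai = new ActivitySource("Experimental.Microsoft.Extensions.AI");
using (meai.StartActivity("chat gemma4:e2b")) { }

foreach (var (name, count) in seenSources)
    Console.WriteLine($"{name}: {count} span(s)");
```

If the Microsoft.Extensions.AI source shows up here but not in the dashboard, the AddSource line is the suspect. If it doesn't show up at all, look at the UseOpenTelemetry() wrapping instead.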

Seeing it work

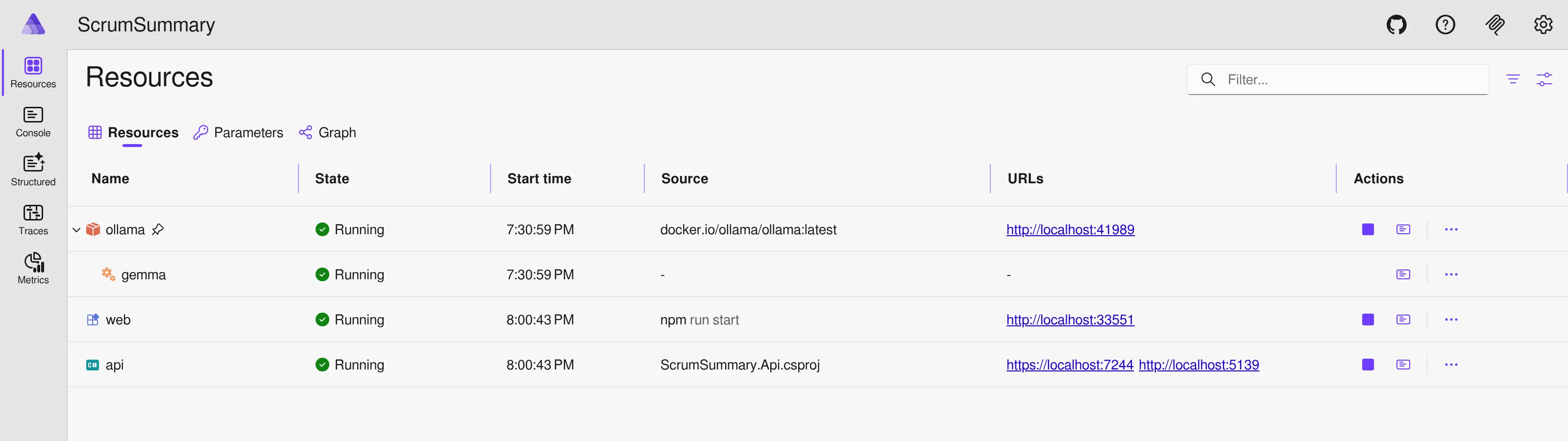

With those four things in place, click Summarize and watch the dashboard. The Resources view shows everything healthy — the Ollama container, the Gemma model, the API, and the web frontend:

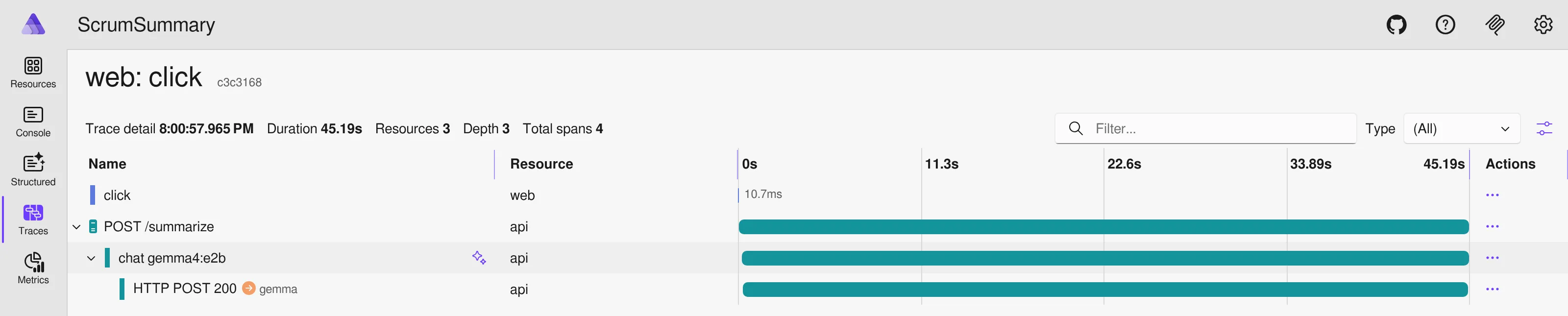

The trace waterfall for a summarize call threads from the browser through the API into the LLM, with the sparkles icon on the GenAI span.

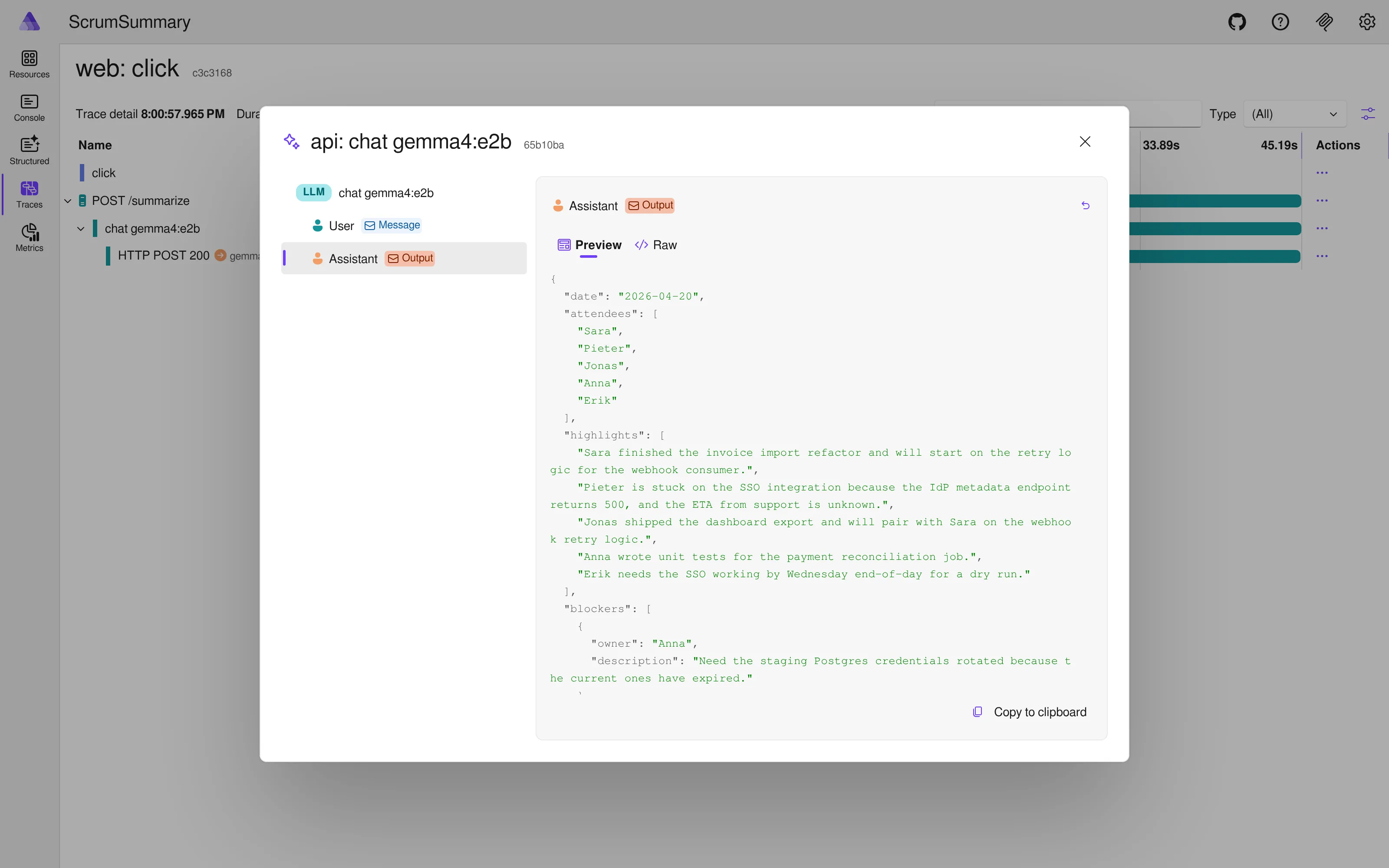

Click the sparkles and the chat log opens with two collapsible entries: User and Assistant.

Expand Assistant and you see the exact JSON Gemma returned, alongside token counts and the finish reason. (Expanding User shows the full prompt you sent — system instructions, schema, transcript.)

Nothing in the visualiser knows or cares that the backend is a local container. It sees gen_ai.input.messages and gen_ai.output.messages on a span with the right scope name and renders. The model could be running on your laptop, in a sidecar on a Kubernetes cluster, or behind a corporate LLM gateway. As long as the attributes make it out, the view is the same.

The raw attributes on the span, for a run against the sample transcript:

- gen_ai.operation.name = chat
- gen_ai.request.model = gemma4:e2b
- gen_ai.response.model = gemma4:e2b
- gen_ai.provider.name = openai
- gen_ai.usage.input_tokens = 382
- gen_ai.usage.output_tokens = 278
- gen_ai.response.finish_reasons = ["stop"]

One of these is a small lie: gen_ai.provider.name says openai because we went through the OpenAI SDK, not because the backend is. Cosmetic for the popup (the dashboard doesn’t filter on provider name), but worth knowing when you’re stitching together a multi-backend observability story.

Swapping Ollama for anything else

This pattern isn’t specific to Ollama. Anything speaking OpenAI-compatible chat completions plugs in with a single-line change to the AppHost. LM Studio on a developer workstation, vLLM serving a fine-tuned model in-cluster, a corporate LLM gateway on the internal network — same shape, same four pieces, same popup at the end.

Three variables to parameterise:

- The base URL.

- The model identifier.

- The auth mechanism (API key, bearer token, or a DelegatingHandler on the OpenAI SDK’s HttpClient for anything exotic).

Caveats worth saying out loud. Not every OpenAI-compatible server behaves the same. Ollama, vLLM, and LM Studio all have small divergences in how they emit usage tokens, handle response_format, and implement tool calls. Corporate gateways are where “just pass any string for the API key” stops being honest advice: custom auth, request signing, or a header-injection proxy needs real wiring via OpenAIClientOptions.Transport. And if the server omits the usage block from its response, the sparkles popup loses its token counts, because the OpenAI SDK only passes through what the server gives it.
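Some gateways authenticate with a custom header rather than the bearer token the SDK already sends from its ApiKeyCredential. For those, one lightweight option is to hand the SDK a long-lived HttpClient that adds the header to every request. In the sketch below, the X-Gateway-Key header is a hypothetical placeholder. The hand-off through System.ClientModel's HttpClientPipelineTransport is shown only as a comment and is not exercised here. Request signing or token refresh needs a DelegatingHandler instead, because default headers are fixed when the client is built.

```csharp
using System;
using System.Net.Http;

// Hypothetical helper: an HttpClient for an OpenAI-compatible gateway that expects a custom header.
HttpClient CreateGatewayHttpClient(string headerName, string headerValue)
{
    var handler = new SocketsHttpHandler
    {
        // Recycle pooled connections so DNS changes behind the gateway are eventually picked up.
        PooledConnectionLifetime = TimeSpan.FromMinutes(5)
    };

    var http = new HttpClient(handler);
    http.DefaultRequestHeaders.Add(headerName, headerValue); // sent on every request
    return http;
}

// Keep one instance for the app's lifetime and pass it to the SDK along the lines of:
//   new OpenAIClientOptions { Endpoint = ..., Transport = new HttpClientPipelineTransport(gatewayHttp) }
var gatewayHttp = CreateGatewayHttpClient("X-Gateway-Key", "dev-placeholder");
Console.WriteLine(string.Join(", ", gatewayHttp.DefaultRequestHeaders.GetValues("X-Gateway-Key")));
```

Setting PooledConnectionLifetime addresses the DNS-refresh concern raised earlier without pulling in IHttpClientFactory.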

The IChatClient side doesn’t change. It already takes a URL and a model name. The only code that moves is the container definition in the hosting integration, and for endpoints you don’t host yourself you can drop the container entirely and use an ExternalService resource that wraps an existing URL.

Wrapping up

Custom resource, IChatClient with UseOpenTelemetry, activity source in ServiceDefaults, content capture switched on. Get all four wired and you’re sitting on the same dashboard trace popup Microsoft ships for Foundry, against any model you want to run.

If you’re behind a data boundary, you now have logging parity with the hosted path without data leaving the environment. If you’re cost-sensitive, the AppHost takes two configurations (local Ollama for development, Azure OpenAI for production) and the consuming code and trace view stay identical across both.

Verified against Aspire 13.2.2, .NET 10 SDK, Microsoft.Extensions.AI 9.9, Microsoft.Extensions.AI.OpenAI 9.9-preview, OpenAI SDK 2.10.